What Will Happen to Our Heads.

I’ll be honest - in recent months I have felt, far too often, an inner resistance, almost a physical pain, when reading yet another post about AI. Why “pain”? It seems to be my visceral reaction to the ignorance that is flooding us, to the lack of ethics, to the manipulation.

Recently I also came across a post by someone who compared what’s happening around AI to a cult - and it’s hard to disagree with that statement. The thing about a cult is that it strips its followers of sober, rational thinking, promoting selective use of facts so they legitimise the subject of the cult.

It’s also enough to read, listen to, or watch the statements of Mr. Altman, for instance, to quickly reach the conclusion that modern AI has long since intellectually surpassed some people.

On top of that, read about how OpenAI and Google have already removed most safety barriers from their models, handing them on a silver platter to the Pentagon - ready to be used in ways that raise serious ethical questions. Anthropic, as of today, is holding firm - but for how long?

And then there’s another dimension entirely. Look at the patterns of how people actually use LLMs - pouring out their most intimate thoughts, fears, secrets, decisions. We are voluntarily surrendering what we have left in our heads, without a fight, to systems owned by corporations whose primary obligation is to their shareholders. On a side note - congratulations to those who do so.

We can also add the hordes of experts who have appeared like mushrooms after the rain - which, by the way, reminds me of the situation from a few years ago, when the hot topics were NFTs, blockchain, crypto, Web3, etc., etc. But I can still understand this phenomenon of the expert boom - that someone wants to make money off it, because the product is being intensively promoted on all fronts, and all you need is a bit of confidence to ride the wave of hysteria, declare yourself an expert, and pump the bubble… I can understand that - you’ve got to make a living somehow.

What I cannot understand - and it was the same with NFTs and Web3 - is building an idealistic vision with nothing behind it, while taking no responsibility for your own words.

Then there are the business people who have pounced on AI - as we say in Polish, jak szczerbaty na suchara, literally “like a gap-toothed man on a dry biscuit” (roughly: like a kid in a candy store). It is, in the end, sad - because history records a whole host of cases where short-sightedness and the lust for quick profits, combined with ignoring the human costs of the changes and the balance they disrupted, ended in catastrophe in the long run.

Yes, yes - LLMs of all kinds are here and will remain in one form or another, though surely the form will be different in a few years - but for the love of God, that’s not the problem. In fact, AI itself is not the problem at all - the problem is what will most likely happen to the human being, and specifically to their head.

About Intelligence

And it is that last point I want to talk about today… Specifically, about Intelligence.

If we accept that as humans, evolution brought us to our current form over a process lasting approximately 7 million years, it is unrealistic to expect drastic changes in how we function, in how that 1.5 kg of gelatinous substance in our skulls operates. It’s true that the process of evolution continues, but… it is a process, to put it mildly, somewhat spread out over time.

In the last year, several research studies related to cognitive science and the impact of AI have appeared. We also have earlier research related to, for example, the Google Effect, etc. We should expect that more such studies will follow - we just have to hope they maintain their scientific objectivity and are not sponsored by the creators of LLMs. You will find more information at the end of this article.

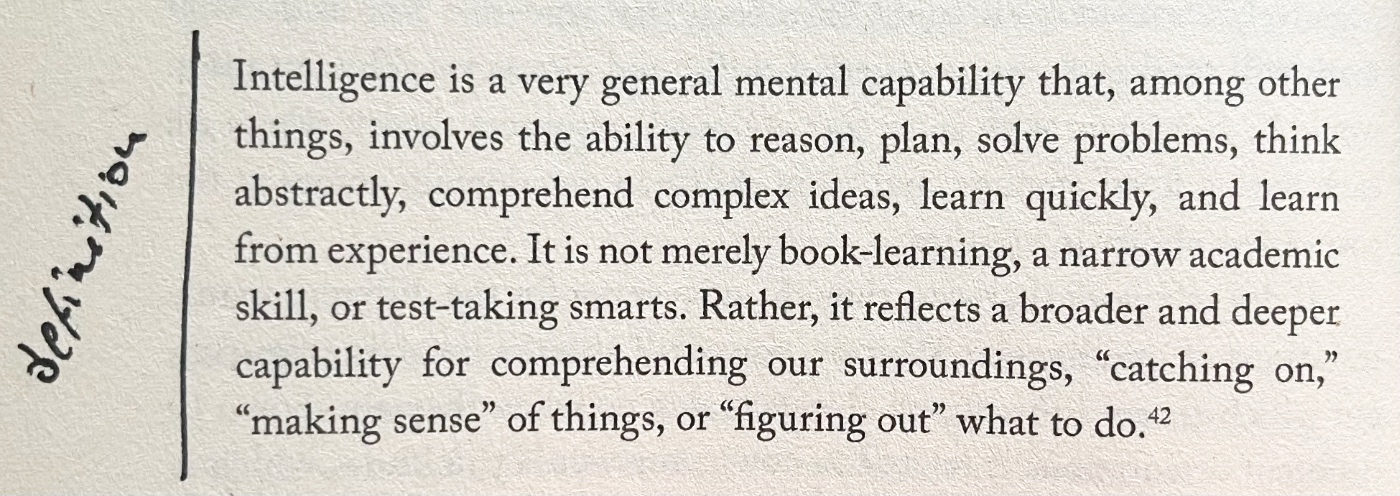

So let’s start with a definition - I found this one in Paul Bloom’s brilliant book Psych: The Story of the Human Mind.

Intelligence and Memory

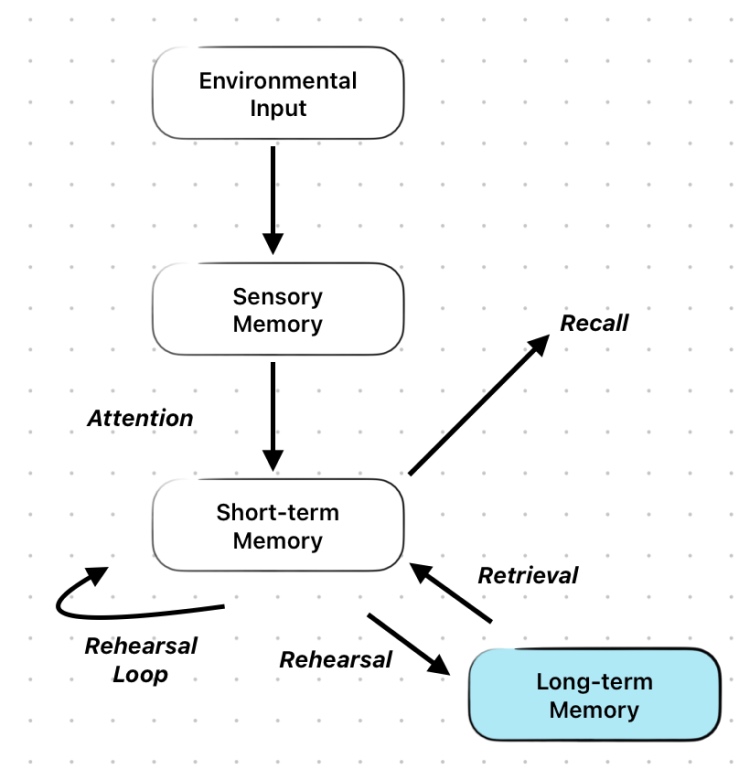

On top of all this, one fundamental thing must be added. Intelligence, our intelligence, needs memory to do its work, and it looks roughly like this:

- Human intelligence is tied to memory - without memory, it cannot develop.

- The memory that matters is long-term memory. It is thanks to it, or more precisely, based on the information retrieved from it, that we are able to build judgments, carry out analysis, etc.

- For long-term memory to be shaped, for information to actually be stored there, that information must undergo deeper thought processes - our brain must be genuinely engaged.

And all of this unfortunately comes down to one thing: to develop, or even just to maintain in proper condition what we already have in our heads - we must use those heads of ours. And we’re talking here about real use, the kind that triggers deeper thought processes.

This is critical and could explain why AI, in the case of people who already possess knowledge in a given domain, can accelerate their work. In the case of everyone else - well, they aren’t able to evaluate the quality of the generated content anyway, and the copy-and-paste mechanism does not contribute to the development of intelligence in any way.

In both cases, using AI passively, or in ways that don’t engage deeper thought processes, will in the long run - one can confidently say - either degrade the intellectual skills already possessed or do nothing to develop them.

You can imagine this mechanism as being similar to watching videos of a fit, muscular person exercising while expecting your own fitness, strength, and muscles to magically grow. They won’t - even if you watched those videos 24 hours a day.

Taking all of the above into account, the mechanism associated with using AI doesn’t fill me with particular optimism. Let’s not kid ourselves - formulating prompts for AI is, in its purest form, outsourcing your own thought processes. Our patience for verifying generated content won’t last long, and time pressure will come on top of that - it is bound to appear, because AI is, after all, supposed to make us more productive. And then what do we have?

Exactly - we end up fully dependent on AI. Because why understand something when you can just tell AI to do it? Why verify and check? It must be right, because… AI says so.

It’s easy for me to imagine a situation where teams begin to intensively use AI and sooner or later lose contact with the subject of their own creation, ceasing to understand how what they “supposedly create” actually works. Operating in any domain requires not only theoretical knowledge but above all practical knowledge - and this applies to absolutely every field.

To write, you must write, work with the material of words, and yourself be the source of thoughts and words. To discuss, you must learn to formulate those thoughts, think critically. And when creating an image or sound, you must also have hands-on experience with that material. All of this is needed just to know what to ask for in that damn prompt. I don’t even need to mention programming, because I experience this myself. In half a day, AI can produce a mass of code that you are physically unable to comprehend.

I wonder whether the current situation somewhat resembles what the Western world did to itself by introducing highly processed food. The idea was more food, and cheaper. And there was more, and it was cheaper - nobody had to go hungry… But here is the other side of the coin: in the US, 75% of the population is overweight; in the UK, 64% - and the figures are still rising.

Will a similar mechanism play out with AI? To make life easier, guided by the principle (of which there will be less and less) that life should be light and pleasant, so overworking your own head is not advisable - will we create a society of idiots unable to make even the simplest decision on their own? I don’t know…

What Can Be Done?

The answer to this question seems obvious. Diligently, with persistence and determination - put AI aside and let your head work on its own. It won’t always and everywhere be practical - there are things worth automating - but it’s worth spending some effort and being mindful enough to know when to stop relying on the tool. I won’t be surprised if this year brings a flood of experts who will be blessed with the knowledge of how to balance the work of teams using AI while simultaneously minimising cognitive decline and maintaining their expertise.

But you don’t need to be an expert. It’s enough to look critically at what we do, how we do it, and how much. And, above all, not to take shortcuts every time - to allow yourself, sometimes, to immerse yourself fully in the subject matter at hand.

Selected Research

- MIT Media Lab study (2025) - ChatGPT users showed up to 55% reduced brain connectivity and 83.3% couldn’t recall passages from essays they’d just written.

- Microsoft/CMU CHI 2025 study (319 workers, 936 examples) - 40% of AI-assisted tasks showed zero critical thinking.

- Gerlich 2025 study (666 UK participants) - significant negative correlation (r = -0.68) between AI usage and critical thinking, with ages 17–25 most affected.

- Wharton School study (~1,000 students) - 48% better performance with AI but worse performance than controls when AI was removed - the “learning paradox”.

- The Google Effect (Sparrow et al., 2011) - earlier research showing people remember less when they know information is digitally accessible.